A gentle introduction to multithreading.

A brief discussion on multi-threading technology

Approaching the world of concurrency, one step at a time.

Modern computers have the ability to perform multiple operations at the same time. Supported by hardware advancements and smarter operating systems, this feature makes your programs run faster, both in terms of speed of execution and responsiveness.

Writing software that takes advantage of such power is fascinating, yet tricky: it requires you to understand what happens under your computer’s hood. In this first episode I’ll try to scratch the surface of threads, one of the tools provided by operating systems to perform this kind of magic. Let’s go!

Processes and threads: naming things the right way

Modern operating systems can run multiple programs at the same time. That’s why you can read this article in your browser (a program) while listening to music on your media player (another program). A program being executed is known as a process. The operating system knows many software tricks to make a process run alongside others, as well as to take advantage of the underlying hardware. Either way, the final outcome is that all your programs appear to be running simultaneously.

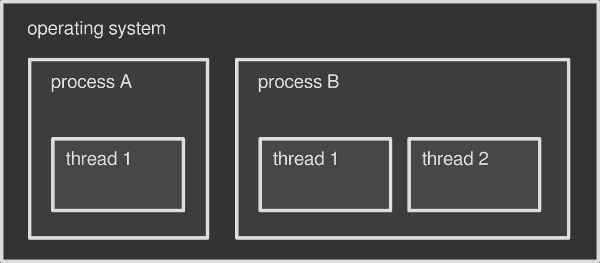

Running processes in an operating system is not the only way to perform several operations at the same time. Each process is able to run simultaneous sub-tasks within itself, called threads. You can think of a thread as a slice of the process itself. Every process triggers at least one thread on startup, which is called the main thread. Then, according to the program’s (or programmer’s) needs, additional threads may be started or terminated. Multithreading is about running multiple threads within a single process.

For example, it is likely that your media player runs multiple threads: one for rendering the interface (this is usually the main thread), another one for playing the music, and so on.

You can think of the operating system as a container that holds multiple processes, where each process is a container that holds multiple threads. In this article I will focus on threads only, but the whole topic is fascinating and deserves more in-depth analysis in the future.

- Operating systems can be seen as a box that contains processes, which in turn contain one or more threads.

The differences between processes and threads

Each process has its own chunk of memory assigned by the operating system. By default that memory cannot be shared with other processes: your browser has no access to the memory assigned to your media player and vice versa. The same thing happens if you run two instances of the same process, that is, if you launch your browser twice. The operating system treats each instance as a new process with its own separate portion of memory. So, by default, two or more processes have no way to share data unless they perform advanced tricks, the so-called inter-process communication (IPC).

Unlike processes, threads share the same chunk of memory assigned to their parent process by the operating system: data in the media player’s main interface can be easily accessed by the audio engine and vice versa. Therefore it is easier for two threads to talk to each other. On top of that, threads are usually lighter than processes: they take fewer resources and are faster to create, which is why they are also called lightweight processes.
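To make the shared-memory point concrete, here is a minimal Python sketch using the standard `threading` module (Python is just my choice for illustration; the article itself is language-agnostic). A second thread writes into a list that the main thread created, and the main thread sees the change directly. The names `playlist` and `audio_engine` are hypothetical. A separate process would work on its own copy of the data and would need IPC to report back.

```python
import threading

# Memory owned by the process: a list created by the main thread.
playlist: list[str] = []

def audio_engine() -> None:
    # Runs in a second thread of the same process and writes straight into
    # the list above: no copying and no inter-process communication needed.
    playlist.append("song.mp3")

engine = threading.Thread(target=audio_engine)
engine.start()
engine.join()  # wait for the thread to finish before looking at the list

print(playlist)  # ['song.mp3']
```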

Threads are a handy way to make your program perform multiple operations at the same time. Without threads you would have to write one program per task, run them as processes and synchronize them through the operating system. This would be more difficult (IPC is tricky) and slower (processes are heavier than threads).

Green threads, or fibers

The threads mentioned so far are an operating system thing: a process that wants to fire up a new thread has to talk to the operating system. Not every platform natively supports threads, though. Green threads, also known as fibers, are a kind of emulation that makes multithreaded programs work in environments that don’t provide that capability. For example, a virtual machine might implement green threads in case the underlying operating system doesn’t have native thread support.

Green threads are faster to create and to manage because they completely bypass the operating system, but they also have disadvantages. I will write about this topic in one of the next episodes.

The name “green threads” refers to the Green Team at Sun Microsystems that designed the original Java thread library in the 90s. Today Java no longer makes use of green threads: it switched to native ones back in 2000. Some other programming languages (Go, Haskell or Rust, to name a few) implement equivalents of green threads instead of native ones.

What threads are used for

Why should a process employ multiple threads? As I mentioned before, doing things in parallel can greatly speed things up. Say you are about to render a movie in your movie editor. The editor could be smart enough to spread the rendering operation across multiple threads, where each thread processes a chunk of the final movie. So if with one thread the task would take, say, one hour, with two threads it would ideally take 30 minutes; with four threads 15 minutes, and so on.
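The chunking idea can be sketched in Python with `concurrent.futures.ThreadPoolExecutor`. This is a minimal illustration under assumptions: `render_frame` is a hypothetical stand-in for real rendering, and the frames are split into contiguous ranges, one per worker. Note that in the default CPython build the global interpreter lock (GIL) lets only one thread execute Python bytecode at a time, so pure-Python CPU-bound work like this will not actually get faster; the ideal speed-up described above needs rendering code that runs outside the GIL (for example in native libraries) or a runtime whose threads execute truly in parallel.

```python
from concurrent.futures import ThreadPoolExecutor

def render_frame(frame_number: int) -> str:
    # Hypothetical stand-in for the real, expensive rendering of one frame.
    return f"frame-{frame_number:04d}"

def render_chunk(frames: range) -> list[str]:
    # Each worker thread renders one contiguous chunk of the movie.
    return [render_frame(n) for n in frames]

def split_into_chunks(total_frames: int, workers: int) -> list[range]:
    # Divide frames 0..total_frames-1 into `workers` nearly equal ranges.
    size, extra = divmod(total_frames, workers)
    chunks, start = [], 0
    for i in range(workers):
        end = start + size + (1 if i < extra else 0)
        chunks.append(range(start, end))
        start = end
    return chunks

def render_movie(total_frames: int, workers: int) -> list[str]:
    chunks = split_into_chunks(total_frames, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in submission order, so frames stay in sequence.
        rendered_chunks = list(pool.map(render_chunk, chunks))
    return [frame for chunk in rendered_chunks for frame in chunk]

if __name__ == "__main__":
    movie = render_movie(total_frames=10, workers=4)
    print(len(movie), movie[0], movie[-1])  # 10 frame-0000 frame-0009
```

Splitting into contiguous ranges keeps each worker’s output in order, so reassembling the movie is just a concatenation.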

Is it really that simple? There are three important points to consider:

- not every program needs to be multithreaded. If your app performs sequential operations or often waits on the user to do something, multithreading might not be that beneficial;
- you can’t just throw more threads at an application to make it run faster: each sub-task has to be thought out and designed carefully to perform parallel operations;
- there is no guarantee that threads will perform their operations truly in parallel, that is, at the same time: it really depends on the underlying hardware.

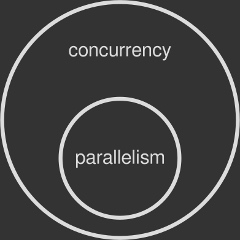

The last one is crucial: if your computer doesn’t support multiple operations at the same time, the operating system has to fake them. We will see how in a minute. For now, let’s think of concurrency as the perception of tasks running at the same time, and of true parallelism as tasks literally running at the same time.

2. Parallelism is a subset of concurrency.

What makes concurrency and parallelism possible

The central processing unit (CPU) in your computer does the hard work of running programs. It is made of several parts, the main one being the so-called core: that’s where computations are actually performed. A core is capable of running only one operation at a time.

This is of course a major drawback. For this reason operating systems have developed advanced techniques to give the user the ability to run multiple processes (or threads) at once, especially in graphical environments, even on a single-core machine. The most important one is called preemptive multitasking, where preemption is the ability to interrupt a task, switch to another one and then resume the first task at a later time.

So if your CPU has only one core, part of an operating system’s job is to spread that single core’s computing power across multiple processes or threads, which are executed one after the other in a loop. This gives you the illusion of having more than one program running in parallel, or of a single program doing multiple things at the same time (if multithreaded). Concurrency is achieved, but true parallelism, the ability to run processes simultaneously, is still missing.

Today modern CPUs have more than one core under the hood, where each one performs an independent operation at a time. This means that with two or more cores true parallelism is possible. For example, my Intel Core i7 has four cores: it can run four different processes or threads simultaneously.

Operating systems are able to detect the number of CPU cores and assign processes or threads to each one of them. A thread may be allocated to whatever core the operating system likes, and this kind of scheduling is completely transparent to the program being run. Additionally, preemptive multitasking might kick in if all cores are busy. This gives you the ability to run more processes and threads than the actual number of cores available in your machine.
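A small Python sketch of the last point: `os.cpu_count()` reports how many logical CPUs the operating system sees, and nothing stops a program from starting several times that many threads; the OS scheduler simply time-slices them. The worker function and the factor of four are arbitrary choices for illustration. In the default CPython build the GIL still serializes Python bytecode, but each `threading.Thread` is a real OS thread scheduled by the operating system.

```python
import os
import threading

def busy_worker(index: int, results: list[int]) -> None:
    # Each thread writes only to its own slot, so no two threads share an element.
    results[index] = sum(range(10_000))

def run_more_threads_than_cores() -> tuple[int, int, list[int]]:
    cores = os.cpu_count() or 1  # cpu_count() returns None if it can't tell
    thread_count = cores * 4     # deliberately start more threads than cores
    results = [0] * thread_count
    threads = [
        threading.Thread(target=busy_worker, args=(i, results))
        for i in range(thread_count)
    ]
    # The OS decides which core runs which thread, and when.
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return cores, thread_count, results

if __name__ == "__main__":
    cores, count, _ = run_more_threads_than_cores()
    print(f"{cores} logical CPUs ran {count} threads")
```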

Multi-threading application on a single core: does it make sense?

True parallelism on a single-core machine is impossible to achieve. Nevertheless, it still makes sense to write a multithreaded program if your application can benefit from it. When a process employs multiple threads, preemptive multitasking can keep the app running even if one of those threads performs a slow or blocking task.

Say, for example, you are working on a desktop app that reads some data from a very slow disk. If you write the program with just one thread, the whole app would freeze until the disk operation is finished: the CPU power assigned to the only thread is wasted while waiting for the disk to wake up. Of course the operating system is running many other processes besides this one, but your specific application will not be making any progress.

Let’s rethink your app in a multithreaded way. Thread A is responsible for the disk access, while thread B takes care of the main interface. If thread A gets stuck waiting because the device is slow, thread B can still run the main interface, keeping your program responsive. This is possible because, having two threads, the operating system can switch the CPU resources between them without getting stuck on the slower one.
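The two-thread design can be sketched in Python as follows. It is a simulation, not real I/O: `time.sleep` stands in for the slow disk, and a counter of loop iterations stands in for the interface staying responsive. The function names are hypothetical. `time.sleep` releases the GIL, so the main thread keeps running while the disk thread is blocked.

```python
import threading
import time

def read_from_slow_disk(result: dict[str, str]) -> None:
    # Thread A: pretend the disk takes half a second to answer.
    time.sleep(0.5)
    result["data"] = "file contents"

def run_app() -> tuple[int, dict[str, str]]:
    result: dict[str, str] = {}
    disk_thread = threading.Thread(target=read_from_slow_disk, args=(result,))
    disk_thread.start()

    # Thread B (here, the main thread) keeps "handling interface events"
    # while thread A is blocked on the slow device.
    ui_refreshes = 0
    while disk_thread.is_alive():
        ui_refreshes += 1
        time.sleep(0.05)  # stand-in for processing one batch of UI events

    disk_thread.join()
    return ui_refreshes, result

if __name__ == "__main__":
    refreshes, result = run_app()
    print(f"UI refreshed {refreshes} times while waiting for: {result['data']}")
```

With a single thread, the loop could not run at all until the read finished; here it keeps ticking for the whole half second.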

More threads, more problems

As we know, threads share the same chunk of memory of their parent process. This makes it extremely easy for two or more of them to exchange data within the same application. For example, a movie editor might hold a big portion of shared memory containing the video timeline. Such shared memory is read by several worker threads designated for rendering the movie to a file. They all just need a handle (e.g. a pointer) to that memory area in order to read from it and output rendered frames to disk.

Things run smoothly as long as two or more threads only read from the same memory location. The trouble kicks in when at least one of them writes to the shared memory while others are reading from it. Two problems can occur at this point:

- data race: while a writer thread modifies the memory, a reader thread might be reading from it. If the writer has not finished its work yet, the reader will get corrupted data;
- race condition: a reader thread is supposed to read only after a writer has written. What if the opposite happens? More subtle than a data race, a race condition is about two or more threads doing their job in an unpredictable order, when in fact the operations must be performed in the proper sequence to be done correctly. Your program can trigger a race condition even if it has been protected against data races.

The concept of thread safety

A piece of code is said to be thread-safe if it works correctly, that is, without data races or race conditions, even if many threads are executing it simultaneously. You might have noticed that some programming libraries declare themselves as being thread-safe: if you are writing a multithreaded program, you want to make sure that any third-party function can be used across different threads without triggering concurrency problems.
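As an illustration, here is a minimal thread-safe counter in Python. It relies on `threading.Lock`, a form of the synchronization mentioned at the end of this article, so that the read-modify-write inside `increment` cannot be interleaved with another thread’s. `SafeCounter` and `hammer` are hypothetical names; eight threads with 10,000 increments each are arbitrary numbers.

```python
import threading

class SafeCounter:
    """A counter that many threads may increment at the same time."""

    def __init__(self) -> None:
        self._value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Only one thread at a time may run the read-modify-write below.
        with self._lock:
            self._value += 1

    @property
    def value(self) -> int:
        with self._lock:
            return self._value

def hammer(counter: SafeCounter, times: int) -> None:
    for _ in range(times):
        counter.increment()

counter = SafeCounter()
threads = [threading.Thread(target=hammer, args=(counter, 10_000)) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter.value)  # 80000
```

Because every increment happens under the lock, the final value does not depend on how the scheduler interleaves the eight threads.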

The root cause of data races

We know that a CPU core can perform only one machine instruction at a time. Such an instruction is said to be atomic because it’s indivisible: it can’t be broken into smaller operations. The Greek word “atom” (ἄτομος; atomos) means uncuttable.

The property of being indivisible makes atomic operations thread-safe by nature. When a thread performs an atomic write on shared data, no other thread can read the modification half-complete. Conversely, when a thread performs an atomic read on shared data, it reads the entire value as it appeared at a single moment in time. There is no way for a thread to slip into the middle of an atomic operation, thus no data race can happen.

The bad news is that the vast majority of operations out there are non-atomic. Even a trivial assignment like x = 1 might, on some hardware, be composed of multiple atomic machine instructions, making the assignment itself non-atomic as a whole. So a data race occurs if a thread reads x while another one performs the assignment.
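Python makes a similar point one level up. The sketch below uses the standard `dis` module to list the bytecode instructions behind `counter += 1`. Unlike the `x = 1` example above, this is a read-modify-write, and it compiles to separate load, add and store instructions (the exact opcode names vary between Python versions). Bytecode is not machine code, so treat this as an analogy: it shows that one line of source is not automatically one indivisible step.

```python
import dis

counter = 0

def increment() -> None:
    global counter
    counter += 1  # one line of source code...

# ...compiles to several bytecode instructions: load, add, store.
ops = [ins.opname for ins in dis.get_instructions(increment)]
print(ops)
```

Another thread could, in principle, run between the load and the store, which is exactly where a lost update would sneak in.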

The root cause of race conditions

Preemptive multitasking gives the operating system full control over thread management: it can start, stop and pause threads according to advanced scheduling algorithms. You as a programmer cannot control the time or order of execution. In fact, there is no guarantee that simple code like this:

```
writer_thread.start()
reader_thread.start()
```

would run the two threads in that specific order. Run this program several times and you will notice how it behaves differently on each run: sometimes the writer thread runs first, sometimes the reader does instead. You will surely hit a race condition if your program needs the writer to always run before the reader.

This behavior is said to be non-deterministic: the outcome changes each time and you can’t predict it. Debugging programs affected by a race condition is very annoying because you can’t always reproduce the problem in a controlled way.
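One way to remove the ordering problem from the writer/reader snippet is to make the reader wait for an explicit signal from the writer. This Python sketch uses `threading.Event`; the reader is deliberately started first, yet it always sees the written value because `wait()` blocks until the writer calls `set()`. The function and variable names are hypothetical, and an event is only one of several possible tools (a queue, or a lock plus a condition variable, would also work).

```python
import threading

def run_once() -> str:
    shared: dict[str, str] = {}
    seen: list[str] = []
    data_ready = threading.Event()

    def writer() -> None:
        shared["message"] = "written"
        data_ready.set()  # announce that the write is complete

    def reader() -> None:
        data_ready.wait()  # block until the writer has called set()
        seen.append(shared["message"])

    reader_thread = threading.Thread(target=reader)
    writer_thread = threading.Thread(target=writer)
    # Start the reader first on purpose: the Event still enforces the order.
    reader_thread.start()
    writer_thread.start()
    reader_thread.join()
    writer_thread.join()
    return seen[0]

outcomes = {run_once() for _ in range(100)}
print(outcomes)  # {'written'}
```

Without the event, the reader could look up `shared["message"]` before it exists and fail with a `KeyError` on some runs but not others.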

Teach threads to get along: concurrency control

Both data races and race conditions are real-world problems: some people even died because of them. The art of coordinating two or more concurrent threads is called concurrency control: operating systems and programming languages offer several solutions to take care of it. The most important ones are:

- synchronization: a way to ensure that resources will be used by only one thread at a time. Synchronization is about marking specific parts of your code as “protected” so that two or more concurrent threads do not simultaneously execute them, screwing up your shared data;
- atomic operations: a bunch of non-atomic operations (like the assignment mentioned before) can be turned into atomic ones thanks to special instructions provided by the CPU and usually exposed through the operating system or the programming language. This way the shared data is always kept in a valid state, no matter how other threads access it;
- immutable data: shared data is marked as immutable, so nothing can change it: threads are only allowed to read from it, eliminating the root cause. As we know, threads can safely read from the same memory location as long as they don’t modify it. This is the main philosophy behind functional programming.
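The immutable-data approach can be sketched in Python with a frozen dataclass holding a tuple. Several threads read the shared timeline concurrently, and an attempt to modify it raises `FrozenInstanceError`. `Timeline` and `total_length` are hypothetical names. Python enforces this immutability only at the level of normal attribute assignment (for example, `object.__setattr__` can still bypass it), so it is weaker than the guarantees of languages built around immutable data.

```python
import threading
from dataclasses import FrozenInstanceError, dataclass

@dataclass(frozen=True)
class Timeline:
    title: str
    frame_durations_ms: tuple[int, ...]  # a tuple cannot be changed in place

def total_length(timeline: Timeline, results: list[int], index: int) -> None:
    # Readers only read, so nothing can change the timeline under their feet.
    results[index] = sum(timeline.frame_durations_ms)

timeline = Timeline("demo", (40, 40, 40, 40))
results = [0] * 4
threads = [
    threading.Thread(target=total_length, args=(timeline, results, i))
    for i in range(4)
]
for t in threads:
    t.start()
for t in threads:
    t.join()

write_rejected = False
try:
    timeline.title = "changed"  # any attempt to modify the shared data fails
except FrozenInstanceError:
    write_rejected = True

print(results, write_rejected)  # [160, 160, 160, 160] True
```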

I will cover all these fascinating topics in the next episodes of this mini-series about concurrency. Stay tuned!

Original text: https://www.internalpointers.com/post/gentle-introduction-multithreading
