Top ten classic sorting algorithms

Ten classic sorting algorithms

#Top ten classic sorting algorithms (animation demonstration) From: Damonare’s personal blog

Original text: http://blog.damonare.cn/2016/12/20/十大经典排序算法总结(javascript描述)/

##0. Algorithm overview

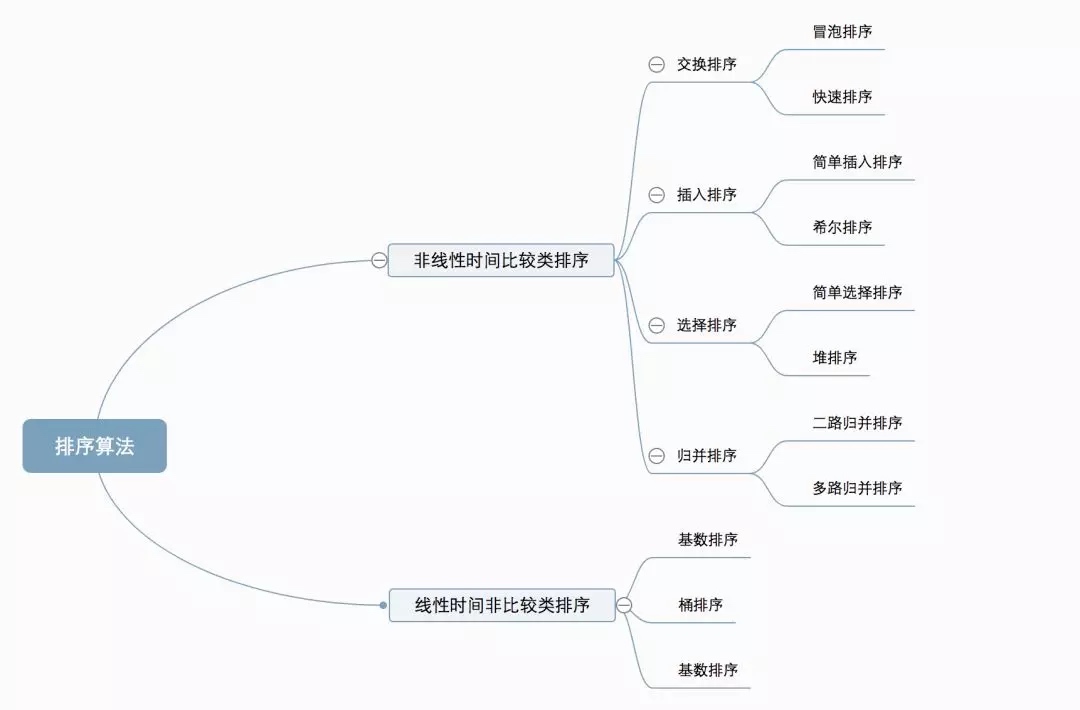

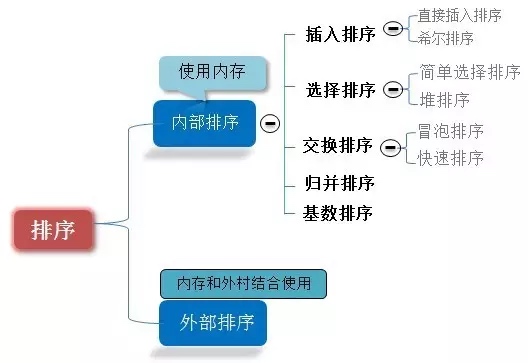

##0.1 Algorithm classification

Ten common sorting algorithms can be divided into two broad categories:

Nonlinear time comparison sorting: Determine the relative order between elements through comparison. Since its time complexity cannot exceed O(nlogn), it is called nonlinear time comparison sorting. Linear time non-comparison sorting: It does not determine the relative order between elements through comparison. It can break through the time lower bound of comparison-based sorting and run in linear time, so it is called linear time non-comparison sorting.

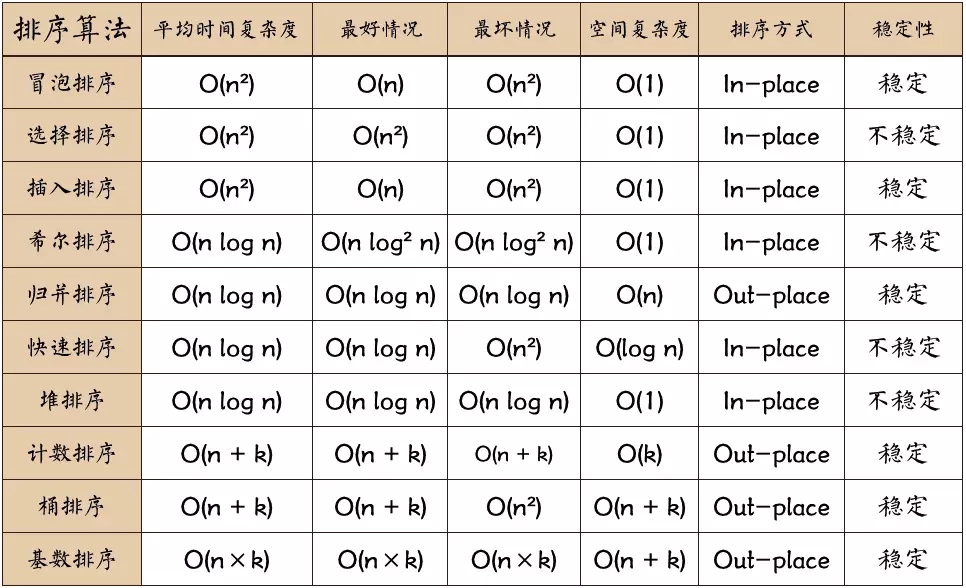

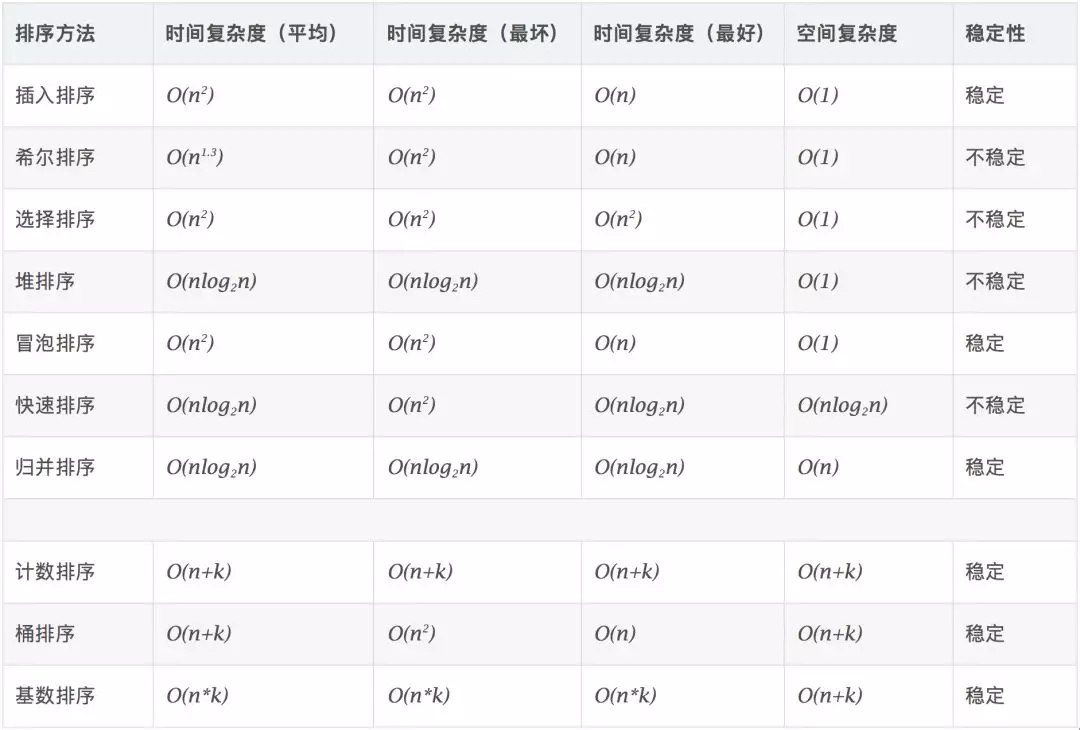

0.2 Algorithm complexity

0.3 Related concepts

Stable: If a is originally in front of b, and a=b, a will still be in front of b after sorting. Unstable: If a is originally in front of b, and a=b, a may appear after b after sorting. Time complexity: the total number of operations on sorted data. Reflects the pattern of the number of operations when n changes. Space complexity: refers to the measurement of the storage space required when an algorithm is executed in a computer. It is also a function of the data size n.

1. Bubble Sort (Bubble Sort)

Bubble sort is a simple sorting algorithm. It repeatedly walks through the sequence to be sorted, comparing elements two at a time and swapping them if they are in the wrong order. The work of visiting the array is repeated until no more exchanges are needed, which means that the array has been sorted. The name of this algorithm comes from the fact that smaller elements will slowly “float” to the top of the array through swapping.

1.1 Algorithm description

-

Compare adjacent elements. If the first is greater than the second, swap them both;

-

Do the same work for each pair of adjacent elements, from the first pair at the beginning to the last pair at the end, so that the last element should be the largest number;

-

Repeat the above steps for all elements except the last one;

-

Repeat steps 1~3 until sorting is completed.

1.2 Animation Demonstration

1.3 Code implementation

function bubbleSort(arr) {

var len = arr.length;

for (var i = 0; i < len - 1; i++) {

for (var j = 0; j < len - 1 - i; j++) {

if (arr[j] > arr[j+1]) { // 相邻元素两两对比

var temp = arr[j+1]; // 元素交换

arr[j+1] = arr[j];

arr[j] = temp;

}

}

}

return arr;

2. Selection Sort

Selection-sort is a simple and intuitive sorting algorithm. How it works: First find the smallest (large) element in the unsorted sequence, store it at the beginning of the sorted sequence, then continue to find the smallest (large) element from the remaining unsorted elements, and then put it at the end of the sorted sequence. And so on until all elements are sorted.

2.1 Algorithm description

Direct selection sorting of n records can obtain ordered results through n-1 direct selection sorting passes. The specific algorithm is described as follows:

- Initial state: The unordered area is R[1…n], and the ordered area is empty;

- When the i-th sorting (i=1,2,3…n-1) starts, the current ordered area and unordered area are R[1…i-1] and R(i…n) respectively. This sorting operation selects the record R[k] with the smallest keyword from the current unordered area, and exchanges it with the first record R in the unordered area, so that R[1…i] and R[i+1…n) become a new ordered area with the number of records increased by 1 and a new unordered area with the number of records reduced by 1 respectively;

- At the end of n-1 passes, the array is sorted.

2.2 Animation Demonstration

2.3 Code implementation

function selectionSort(arr) {

var len = arr.length;

var minIndex, temp;

for (var i = 0; i < len - 1; i++) {

minIndex = i;

for (var j = i + 1; j < len; j++) {

if (arr[j] < arr[minIndex]) { // 寻找最小的数

minIndex = j; // 将最小数的索引保存

}

}

temp = arr[i];

arr[i] = arr[minIndex];

arr[minIndex] = temp;

}

return arr;

}

2.4 Algorithm analysis

One of the most stable sorting algorithms, because no matter what data is entered, the time complexity is O(n2), so when using it, the smaller the data size, the better. The only advantage may be that it does not occupy additional memory space. Theoretically speaking, selection sort may also be the most common sorting method that most people think of when sorting.

- Insertion Sort

The algorithm description of insertion sort (Insertion-Sort) is a simple and intuitive sorting algorithm. It works by constructing an ordered sequence. For unsorted data, it scans from back to front in the sorted sequence, finds the corresponding position and inserts it.

3.1 Algorithm description

Generally speaking, insertion sort is implemented on arrays using in-place. The specific algorithm is described as follows:

- Starting from the first element, the element can be considered to have been sorted;

- Take out the next element and scan from back to front in the sorted element sequence;

- If the element (sorted) is larger than the new element, move the element to the next position;

- Repeat step 3 until you find the position where the sorted element is less than or equal to the new element;

- After inserting the new element into this position;

- Repeat steps 2~5.

3.2 GIF demonstration

3.3 Code implementation

function insertionSort(arr) {

var len = arr.length;

var preIndex, current;

for (var i = 1; i < len; i++) {

preIndex = i - 1;

current = arr[i];

while (preIndex >= 0 && arr[preIndex] > current) {

arr[preIndex + 1] = arr[preIndex];

preIndex--;

}

arr[preIndex + 1] = current;

}

return arr;

}

3.4 Algorithm analysis

In the implementation of insertion sort, in-place sorting is usually used (that is, sorting that only uses O(1) extra space). Therefore, during the scanning process from back to front, the sorted elements need to be repeatedly and gradually shifted backward to provide insertion space for the latest elements.

- Shell Sort

Invented by Shell in 1959, it was the first sorting algorithm to break through O(n2) and was an improved version of simple insertion sort. It differs from insertion sort in that it compares elements that are farther away first. Hill sort is also called reducing increment sort.

4.1 Algorithm description

First, the entire record sequence to be sorted is divided into several subsequences for direct insertion sorting. The specific algorithm is described:

Select an incremental sequence t1, t2,…,tk, where ti>tj, tk=1;

Sort the sequence k times according to the number of incremental sequences k;

In each sorting pass, the column to be sorted is divided into several subsequences of length m according to the corresponding increment ti, and direct insertion sorting is performed on each subtable. Only when the increment factor is 1, the entire sequence is processed as a table, and the length of the table is the length of the entire sequence.

4.2 GIF demonstration

4.3 Code implementation

function shellSort(arr) {

var len = arr.length,

temp,

gap = 1;

while (gap < len / 3) { // 动态定义间隔序列

gap = gap * 3 + 1;

}

for (gap; gap > 0; gap = Math.floor(gap / 3)) {

for (var i = gap; i < len; i++) {

temp = arr[i];

for (var j = i-gap; j > 0 && arr[j]> temp; j-=gap) {

arr[j + gap] = arr[j];

}

arr[j + gap] = temp;

}

}

return arr;

}

4.4 Algorithm analysis

The core of Hill sorting lies in the setting of interval sequence. The interval sequence can be set in advance or dynamically defined. The algorithm for dynamically defining interval sequences was proposed by Robert Sedgewick, co-author of “Algorithms (4th Edition)”.

- Merge Sort

Merge sort is an effective sorting algorithm based on merge operations. This algorithm is a very typical application using the divide and conquer method (Divide and Conquer). Merge the already ordered subsequences to obtain a completely ordered sequence; that is, first make each subsequence orderly, and then make the subsequence segments orderly. If two ordered lists are merged into one ordered list, it is called a 2-way merge.

5.1 Algorithm description

Divide the input sequence of length n into two subsequences of length n/2;

Use merge sorting on these two subsequences;

Merge two sorted subsequences into a final sorted sequence.

5.2 GIF demonstration

5.3 Code implementation

function mergeSort(arr) { // 采用自上而下的递归方法

var len = arr.length;

if (len < 2) {

return arr;

}

var middle = Math.floor(len / 2),

left = arr.slice(0, middle),

right = arr.slice(middle);

return merge(mergeSort(left), mergeSort(right));

}

function merge(left, right) {

var result = [];

while (left.length>0 && right.length>0) {

if (left[0] <= right[0])="" {<="" span="">

result.push(left.shift());

} else {

result.push(right.shift());

}

}

while (left.length)

result.push(left.shift());

while (right.length)

result.push(right.shift());

return result;

}

5.4 Algorithm analysis

Merge sort is a stable sorting method. Like selection sort, the performance of merge sort is not affected by the input data, but its performance is much better than selection sort because the time complexity is always O(nlogn). The price is additional memory space.

- Quick Sort

The basic idea of quick sorting is to separate the records to be sorted into two independent parts through one sorting. If the keywords of one part of the record are smaller than the keywords of the other part, then the two parts of the records can be sorted separately to achieve the ordering of the entire sequence.

6.1 Algorithm description

Quick sort uses the divide-and-conquer method to divide a list into two sub-lists. The specific algorithm is described as follows:

Picking out an element from the sequence is called a “pivot”;

Reorder the array so that all elements smaller than the base value are placed in front of the base, and all elements larger than the base value are placed behind the base (the same number can go to either side). After this partition exits, the base is in the middle of the sequence. This is called a partition operation;

Recursively sort the subarray of elements less than the base value and the subarray of elements greater than the base value.

6.2 GIF demonstration

6.3 Code implementation

function quickSort(arr, left, right) {

var len = arr.length,

partitionIndex,

left = typeof left != 'number' ? 0 : left,

right = typeof right != 'number' ? len - 1 : right;

if (left < right) {

partitionIndex = partition(arr, left, right);

quickSort(arr, left, partitionIndex-1);

quickSort(arr, partitionIndex+1, right);

}

return arr;

}

function partition(arr, left ,right) { // 分区操作

var pivot = left, // 设定基准值(pivot)

index = pivot + 1;

for (var i = index; i <= right;="" i++)="" {<="" span="">

if (arr[i] < arr[pivot]) {

swap(arr, i, index);

index++;

}

}

swap(arr, pivot, index - 1);

return index-1;

}

function swap(arr, i, j) {

var temp = arr[i];

arr[i] = arr[j];

arr[j] = temp;

}

- Heap Sort

Heapsort refers to a sorting algorithm designed using the data structure of a heap. Stacking is a structure that approximates a complete binary tree and satisfies the properties of stacking: that is, the key value or index of a child node is always smaller (or larger) than its parent node.

7.1 Algorithm description

Construct the initial sequence of keywords to be sorted (R1, R2…Rn) into a large top heap, which is the initial unordered area;

Exchange the top element R[1] with the last element R[n], and then get a new unordered area (R1, R2,…Rn-1) and a new ordered area (Rn), and satisfy R[1,2…n-1]<=r[n];< span=“”></=r[n];<>

Since the new top R[1] of the heap after the exchange may violate the properties of the heap, it is necessary to adjust the current disordered area (R1, R2,…Rn-1) into a new heap, and then exchange R[1] with the last element of the disordered area again to obtain a new disordered area (R1, R2…Rn-2) and a new ordered area (Rn-1, Rn). This process is repeated until the number of elements in the ordered area is n-1, then the entire sorting process is completed.

7.2 GIF demonstration

7.3 Code implementation

var len; // 因为声明的多个函数都需要数据长度,所以把len设置成为全局变量

function buildMaxHeap(arr) { // 建立大顶堆

len = arr.length;

for (var i = Math.floor(len/2); i >= 0; i--) {

heapify(arr, i);

}

}

function heapify(arr, i) { // 堆调整

var left = 2 * i + 1,

right = 2 * i + 2,

largest = i;

if (left < len && arr[left] > arr[largest]) {

largest = left;

}

if (right < len && arr[right] > arr[largest]) {

largest = right;

}

if (largest != i) {

swap(arr, i, largest);

heapify(arr, largest);

}

}

function swap(arr, i, j) {

var temp = arr[i];

arr[i] = arr[j];

arr[j] = temp;

}

function heapSort(arr) {

buildMaxHeap(arr);

for (var i = arr.length - 1; i > 0; i--) {

swap(arr, 0, i);

len--;

heapify(arr, 0);

}

return arr;

}

- Counting Sort

Counting sort is not a comparison-based sorting algorithm. Its core lies in converting input data values into keys and storing them in an additionally opened array space. As a linear time complexity sort, counting sort requires that the input data must be integers within a certain range.

8.1 Algorithm description

Find the largest and smallest elements in the array to be sorted;

Count the number of occurrences of each element with value i in the array and store it in the i-th item of array C;

Accumulate all counts (starting from the first element in C, each item is added to the previous item);

Fill the target array in reverse: Place each element i in the C(i)th item of the new array, and subtract 1 from C(i) for each element placed.

8.2 GIF demonstration

8.3 Code implementation

function countingSort(arr, maxValue) {

var bucket = new Array(maxValue + 1),

sortedIndex = 0;

arrLen = arr.length,

bucketLen = maxValue + 1;

for (var i = 0; i < arrLen; i++) {

if (!bucket[arr[i]]) {

bucket[arr[i]] = 0;

}

bucket[arr[i]]++;

}

for (var j = 0; j < bucketLen; j++) {

while(bucket[j] > 0) {

arr[sortedIndex++] = j;

bucket[j]--;

}

}

return arr;

}

8.4 Algorithm Analysis

Counting sort is a stable sorting algorithm. When the input elements are n integers between 0 and k, the time complexity is O(n+k) and the space complexity is also O(n+k). Its sorting speed is faster than any comparison sorting algorithm. Counting sort is a very effective sorting algorithm when k is not very large and the sequences are relatively concentrated.

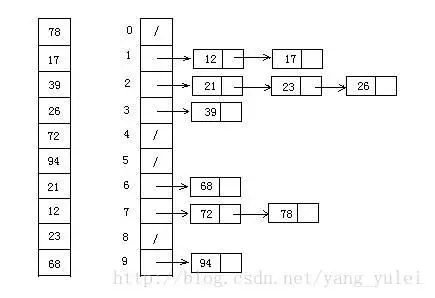

- Bucket Sort

Bucket sort is an upgraded version of counting sort. It makes use of the mapping relationship of functions. The key to efficiency lies in the determination of this mapping function. The working principle of Bucket sort: Assume that the input data obeys a uniform distribution, divide the data into a limited number of buckets, and then sort each bucket separately (it is possible to use other sorting algorithms or continue to use bucket sorting in a recursive manner).

9.1 Algorithm description

Set a quantitative array as an empty bucket;

Traverse the input data and put the data into the corresponding buckets one by one;

Sort each bucket that is not empty;

Concatenate sorted data from buckets that are not empty.9.2 Picture demonstration

9.3 Code implementation

function bucketSort(arr, bucketSize) {

if (arr.length === 0) {

return arr;

}

var i;

var minValue = arr[0];

var maxValue = arr[0];

for (i = 1; i < arr.length; i++) {

if (arr[i] < minValue) {

minValue = arr[i]; // 输入数据的最小值

} else if (arr[i] > maxValue) {

maxValue = arr[i]; // 输入数据的最大值

}

}

// 桶的初始化

var DEFAULT_BUCKET_SIZE = 5; // 设置桶的默认数量为5

bucketSize = bucketSize || DEFAULT_BUCKET_SIZE;

var bucketCount = Math.floor((maxValue - minValue) / bucketSize) + 1;

var buckets = new Array(bucketCount);

for (i = 0; i < buckets.length; i++) {

buckets[i] = [];

}

// 利用映射函数将数据分配到各个桶中

for (i = 0; i < arr.length; i++) {

buckets[Math.floor((arr[i] - minValue) / bucketSize)].push(arr[i]);

}

arr.length = 0;

for (i = 0; i < buckets.length; i++) {

insertionSort(buckets[i]); // 对每个桶进行排序,这里使用了插入排序

for (var j = 0; j < buckets[i].length; j++) {

arr.push(buckets[i][j]);

}

}

return arr;

}

9.4 Algorithm analysis

Bucket sorting uses linear time O(n) in the best case. The time complexity of bucket sorting depends on the time complexity of sorting data between buckets, because the time complexity of other parts is O(n). Obviously, the smaller the buckets are divided, the less data there is between each bucket, and the less time it takes to sort. But the corresponding space consumption will increase.

- Radix Sort

Radix sorting is to sort by low order first, and then collect; then sort by high order, and then collect; and so on, until the highest order. Sometimes some attributes have a priority order, sorted by low priority first, and then by high priority. The final order is that those with higher priority come first, and those with the same high priority and lower priority come first.

10.1 Algorithm description

Get the maximum number in the array and get the number of digits;

arr is the original array, and each bit is taken from the lowest bit to form the radix array;

Perform counting sorting on radix (using the feature of counting sorting suitable for small range numbers);

10.2 GIF demonstration

10.3 Code implementation

// LSD Radix Sort

var counter = [];

function radixSort(arr, maxDigit) {

var mod = 10;

var dev = 1;

for (var i = 0; i < maxDigit; i++, dev *= 10, mod *= 10) {

for(var j = 0; j < arr.length; j++) {

var bucket = parseInt((arr[j] % mod) / dev);

if(counter[bucket]==null) {

counter[bucket] = [];

}

counter[bucket].push(arr[j]);

}

var pos = 0;

for(var j = 0; j < counter.length; j++) {

var value = null;

if(counter[j]!=null) {

while ((value = counter[j].shift()) != null) {

arr[pos++] = value;

}

}

}

}

return arr;

}

10.4 Algorithm Analysis

Radix sorting is based on separate sorting and separate collection, so it is stable. However, the performance of radix sorting is slightly worse than bucket sorting. Each bucket allocation of keywords requires O(n) time complexity, and obtaining a new keyword sequence after allocation requires O(n) time complexity. If the data to be sorted can be divided into d keywords, the time complexity of radix sorting will be O(d*2n). Of course, d is much smaller than n, so it is basically linear.

The space complexity of radix sort is O(n+k), where k is the number of buckets. Generally n >> k, so the extra space needs about n.

读完之后,下一步看什么

如果还想继续了解,可以从下面几个方向接着读。